AI Transformation in Telco – Operating Model or Technology?

SAKET BIVALKAR

Saket Bivalkar is the Managing Partner of Versatile Consulting, a boutique consultancy that designs operating models for multinationals. His work focuses on building models that hold across geographies while allowing for local adaptation. Recent engagements include 63-country and 42-country operating model transformations in regulated industries.

He is based in Spain and can be reached at saket@versatile.consulting.

AI Transformation in Telco: What the CTO Owns and What the Operating Model Must Solve First

Telco AI failures tend to follow the same pattern. A capable team deploys a model into production. The model performs well in the environment where engineers trained it. Within three to six months, adoption stalls, the business quietly deprioritises the use case, and the programme gets relabelled a “pilot.” Nobody calls it a failure. It just stops moving.

The standard explanation is that the technology was not ready, or that the team underinvested in change management, or that the use case was too ambitious. These are plausible stories. They are usually wrong.

The more accurate explanation is that the organisation was not designed to use what it built. The model ran into a fragmented telco AI operating model. The fragmented operating model won.

Why the telco operating model is built for a different era

Telecommunications companies are structurally complex in a way that most industries are not. They run multiple networks, across multiple geographies, under multiple regulatory regimes, with product portfolios spanning consumer, enterprise, and wholesale. The org chart that manages this complexity was not designed for speed. Instead, it was designed for control, redundancy, and risk containment.

That design made sense when the primary risk was service outage. It does not make sense when the primary risk is falling three years behind a more agile competitor that has figured out how to deploy AI at scale.

As a result, telcos end up with an operating model that is functionally siloed at exactly the level where AI creates value: the point where data from one domain, such as network performance, needs to inform a decision in another domain, such as customer churn, pricing, or field dispatch. Most telco architectures move that data slowly, through governance processes built to prevent errors, not to enable inference.

Deploying AI models into this architecture does not simplify it. In fact, it adds a new layer of complexity on top of a structure that was already struggling to coordinate.

What the CTO must own in a telco AI transformation

The CTO is the right sponsor for AI in telco. Not because AI is a technology problem, but because the CTO is one of the few executives with cross-domain visibility: network, IT, product engineering, data platforms, and increasingly, customer-facing systems.

That cross-domain visibility matters because the failure modes in telco AI are almost never technical. They are jurisdictional. A network optimisation model requiring clean, labelled data from five different operational domains will be blocked by whoever owns domain three and sees no reason to cooperate. Similarly, a predictive maintenance system that saves money in the network division while creating scheduling pressure in field services will face resistance from field services, regardless of the aggregate financial case.

The CTO cannot fix this by owning the technology layer alone. To drive real change, they must own the decision rights that sit above the technology layer.

That means the CTO needs to resolve four things before any significant AI deployment goes into production:

1. Who owns the data at the point of inference, not just at the point of collection?

Most telcos have reasonable data governance for regulatory and billing purposes. However, very few have clear ownership at the moment a model needs to act on that data in real time. This ambiguity produces delays, workarounds, and ultimately, models that run on stale or incomplete inputs.

2. Who is accountable when the model is wrong?

This is not a hypothetical. A churn prediction model that incorrectly flags high-value customers for aggressive retention spend costs real money. Moreover, a network anomaly model that generates false positives at 2am creates real operational burden. Settling accountability before deployment changes how carefully engineers build the model, how well they calibrate thresholds, and whether the business actually trusts the output.

3. How does the model’s output enter a workflow?

A model that produces a score, a ranking, or a recommendation is not useful until someone connects that output to an action. In fragmented telco organisations, that connection is often manual, ad hoc, or blocked by a system outside the AI team’s control. Therefore, the CTO must treat workflow integration as part of the deployment itself, not as a follow-on task for someone else to figure out.

4. What does success look like at 12 months, not at demo day?

Telco AI programmes are often measured at the point of technical delivery. The model is live, the infrastructure is running, and the accuracy metrics look reasonable. Yet twelve months later, adoption among intended users sits at 20%, the business outcome has not moved, and the programme is winding down quietly. Consequently, the CTO needs a 12-month accountability structure from day one, covering usage metrics, decision outcomes, and a named owner responsible for both.
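One way to make a 12-month accountability structure concrete is to keep a small review record per use case. The sketch below is illustrative only: the `UseCaseReview` class, its field names, and the 50% adoption threshold are assumptions introduced here, not a standard framework.

```python
from dataclasses import dataclass


@dataclass
class UseCaseReview:
    """Hypothetical 12-month review record for one AI use case."""
    name: str
    owner: str               # named owner for usage and outcomes; "" if unassigned
    intended_users: int      # people expected to act on the model's output
    active_users: int        # people actually using it at review time
    outcome_baseline: float  # business metric before deployment
    outcome_current: float   # same metric at the review date

    def adoption_rate(self) -> float:
        if self.intended_users <= 0:
            raise ValueError("intended_users must be positive")
        return self.active_users / self.intended_users

    def outcome_change(self) -> float:
        """Relative change in the business metric since deployment."""
        if self.outcome_baseline == 0:
            raise ValueError("outcome_baseline must be non-zero")
        return (self.outcome_current - self.outcome_baseline) / self.outcome_baseline

    def needs_attention(self, min_adoption: float = 0.5) -> bool:
        # Assumed rule: flag when there is no owner, adoption is low,
        # or the business metric has not moved at all.
        return (
            not self.owner
            or self.adoption_rate() < min_adoption
            or self.outcome_change() == 0
        )


review = UseCaseReview("churn prediction", "Head of Retention", 200, 40, 0.020, 0.020)
healthy = UseCaseReview("incident correlation", "Head of NOC", 100, 80, 0.020, 0.018)
print(review.name, f"{review.adoption_rate():.0%}", review.needs_attention())
```

The first record mirrors the pattern described above: the model is live, but adoption is at 20% and the outcome has not moved, so the review flags it even though delivery was technically successful.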

Three operating model problems that block telco AI at scale

1. Accountability without authority

Telcos regularly create AI or digital transformation roles without giving those roles meaningful authority over the domains that need to change. A Chief AI Officer who does not control hiring in data engineering, budgets in IT, or priorities in product is a communications function, not a transformation function.

The operating model must specify, in writing, what the AI function can approve, what it can block, and what it escalates to the C-suite. Without that specification, every significant AI initiative becomes a negotiation. Negotiations with fragmented domains at scale produce the slowest possible outcome.
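A written specification of this kind can be as simple as a lookup table. The following is a minimal sketch under stated assumptions: the decision names in `DECISION_RIGHTS` are hypothetical examples, and defaulting unlisted decisions to escalation is a design choice made here for illustration.

```python
from enum import Enum


class Right(Enum):
    APPROVE = "approve"    # the AI function can decide on its own
    BLOCK = "block"        # the AI function can stop the action
    ESCALATE = "escalate"  # the decision goes to the C-suite


# Hypothetical register of decision rights held by the AI function.
DECISION_RIGHTS = {
    "model_release_to_production": Right.APPROVE,
    "cross_domain_data_access": Right.APPROVE,
    "deployment_without_named_owner": Right.BLOCK,
    "domain_budget_reallocation": Right.ESCALATE,
}


def resolve(decision: str) -> Right:
    """Return the written right for a decision.

    Anything not written down is escalated (an assumption of this sketch),
    which makes gaps in the specification visible instead of negotiable.
    """
    return DECISION_RIGHTS.get(decision, Right.ESCALATE)


print(resolve("model_release_to_production").value)
print(resolve("new_vendor_selection").value)
```

The point of the table is not the code but the discipline: if a decision type cannot be placed in one of the three columns, it will be settled by negotiation each time it comes up.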

2. Shared data treated as an afterthought

Telco AI at scale requires shared data assets: unified customer profiles, network event logs correlated across domains, and field service records linked to network incidents. These assets do not exist by default. Someone has to build, govern, and fund them as shared infrastructure.

The critical operating model question is: who pays for shared data, and who decides what gets built? If each domain funds its own data and contributes to a central pool only when convenient, that pool will always be underfunded. As a result, the AI use cases depending on it will always underperform. The CTO needs to make the economic case for shared data investment at board level, and the operating model must reflect that investment with clear governance.

3. AI deployment treated as a project, not a capability

Most telco AI programmes run as projects: a defined scope, a timeline, a budget, and a completion date. This structure suits building a billing system. It does not suit deploying AI, because AI requires ongoing measurement, model retraining, threshold adjustment, and workflow iteration. The day the project closes is often the day the model starts degrading.

Instead, the operating model needs a permanent AI operations function with the budget, the mandate, and the talent to maintain what gets built. Not a centre of excellence offering advisory support, but an operational team with direct responsibility for model performance in production.

How to sequence your AI portfolio: Run, Grow, Transform

Before fixing the operating model, the CTO needs a portfolio view that makes dependencies explicit. Most telcos have AI initiatives scattered across the organisation with no shared prioritisation logic. A practical three-tier structure cuts through that:

Run (stabilise and optimise): AIOps for incident correlation, anomaly detection, predictive maintenance, and ticket triage. These use cases have clear data sources, measurable outcomes, and limited workflow complexity. They are the right place to prove repeatability and build internal trust in AI-driven decisions.

Grow (revenue and customer experience): Next-best-action, churn prevention, personalisation, and pricing optimisation. These use cases depend on cross-domain data and require commercial teams to act on model outputs. Consequently, they expose operating model gaps earlier and demand tighter accountability structures.

Transform (operating model shifts): Autonomous network operations, agentic customer care, and zero-touch provisioning. These use cases are not technology problems. They are redesign programmes. Attempting them before the Run and Grow layers are stable is the single most common reason telco AI ambitions stall at the board level.

The portfolio lens does one critical thing: it makes the upstream dependencies visible. AI value in telco often depends on process redesign, data capture investment, and policy changes that sit outside the AI team’s control. Mapping initiatives to the three tiers forces that conversation earlier.
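The dependency mapping can be sketched as a small graph. This is an illustrative example, not a prescribed tool: the `Initiative` class and the sample portfolio are assumptions, and the ordering uses Python's standard `graphlib.TopologicalSorter` (Python 3.9 or later).

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class Initiative:
    """Hypothetical portfolio entry."""
    name: str
    tier: str  # "run", "grow", or "transform"
    depends_on: list[str] = field(default_factory=list)


def sequence(initiatives: list[Initiative]) -> list[str]:
    """Order initiatives and their dependencies so prerequisites come first."""
    graph = {i.name: set(i.depends_on) for i in initiatives}
    return list(TopologicalSorter(graph).static_order())


def upstream_dependencies(initiatives: list[Initiative]) -> list[str]:
    """Dependencies that are not AI initiatives themselves.

    These are the items (data products, process or policy changes)
    that sit outside the AI team's control.
    """
    names = {i.name for i in initiatives}
    deps = {d for i in initiatives for d in i.depends_on}
    return sorted(deps - names)


portfolio = [
    Initiative("agentic customer care", "transform",
               ["churn prevention", "customer interaction timeline"]),
    Initiative("churn prevention", "grow", ["customer interaction timeline"]),
    Initiative("incident correlation", "run", ["alarm and event model"]),
]

order = sequence(portfolio)
print(order)
print(upstream_dependencies(portfolio))
```

In this sample, the Transform initiative cannot be sequenced ahead of the Grow initiative it depends on, and both data products surface as upstream items that someone outside the AI team has to fund and own.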

What a strong telco AI operating model looks like in practice

The telcos making real progress on AI at scale share a small number of structural characteristics.

Four traits that separate leaders from laggards

First, they have a clearly defined AI transformation owner at C-suite level, with authority extending across network, IT, and commercial domains.

Second, they fund shared data infrastructure as a strategic asset, not a cost centre.

Third, they maintain a model operations capability that is separate from the delivery team that built the models.

Fourth, and perhaps most importantly, they use an explicit governance framework that maps AI decisions to business outcomes, not to technical metrics.

None of these are technology choices. They are operating model choices. The technology is secondary.

What to fix first: a sequenced priority stack

Most telcos try to scale AI before the prerequisites are in place. A more resilient sequence is:

Step 1: Establish the non-negotiables (weeks 0 to 6)

Define the reference architecture for AI services, data products, and integration patterns. Set the security baseline for data access, model artefacts, and GenAI usage. Produce a shortlist of the top ten use cases with explicit value hypotheses and dependency maps.

Step 2: Build the two foundations that unblock everything else (weeks 6 to 16)

First, build foundational data products: a normalised alarm and event model, and a unified customer interaction timeline. These two assets alone unblock a disproportionate number of downstream use cases. Second, build a deployment path (CI/CD, model registry, and monitoring) that can pass an internal governance audit.

Step 3: Prove repeatability with two or three industrialised use cases (quarter two)

Choose use cases that demonstrate end-to-end delivery and operational adoption, for example AIOps for incident correlation with measurable MTTR reduction, care agent assist that reduces average handle time, or predictive maintenance that reduces truck rolls. The objective is not a compelling demo. It is a repeatable delivery system: the second use case should be materially cheaper and faster than the first.

How to measure whether the telco AI operating model is working

Track metrics that prove the operating model is functioning, not just that models exist.

Delivery performance: lead time from approved use case to production; release frequency; change failure rate for AI services.

Data reliability: percentage of critical data products meeting quality SLAs; mean time to resolve data incidents.

Model operations: model SLO compliance; drift detection time; rollback frequency; alert-to-action time.

Business outcomes: MTTR reduction; churn rate; cost-to-serve; truck roll reduction; NPS movement.

The most important discipline is ensuring every scaled use case has a named owner, a runbook, and an adoption mechanism. If the business cannot operate it without the AI team present, it is not done.
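Several of the metrics above reduce to simple arithmetic once the underlying records exist. The sketch below shows three of them; the `Release` record, its fields, and the sample figures are hypothetical and only illustrate the calculations.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import median


@dataclass
class Release:
    """Hypothetical record of one AI service release."""
    use_case: str
    approved: date       # date the use case was approved
    in_production: date  # date the release went live
    failed: bool         # True if the release caused an incident or a rollback


def median_lead_time_days(releases: list[Release]) -> float:
    """Median days from approved use case to production."""
    return median((r.in_production - r.approved).days for r in releases)


def change_failure_rate(releases: list[Release]) -> float:
    """Share of releases that caused an incident or a rollback."""
    if not releases:
        raise ValueError("no releases recorded")
    return sum(r.failed for r in releases) / len(releases)


def mttr_reduction(baseline_minutes: float, current_minutes: float) -> float:
    """Relative MTTR reduction against a pre-deployment baseline."""
    if baseline_minutes <= 0:
        raise ValueError("baseline_minutes must be positive")
    return (baseline_minutes - current_minutes) / baseline_minutes


start = date(2024, 1, 1)  # illustrative sample data, not real results
releases = [
    Release("incident correlation", start, start + timedelta(days=90), True),
    Release("care agent assist", start, start + timedelta(days=60), False),
    Release("predictive maintenance", start, start + timedelta(days=30), False),
]

print(median_lead_time_days(releases))
print(round(change_failure_rate(releases), 2))
print(mttr_reduction(120, 90))
```

A falling lead time across successive releases is one signal that the delivery system is becoming repeatable rather than being rebuilt for each use case.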

Diagnostic: how ready is your telco AI operating model?

Score your organisation before you read the next section. For each statement below, give yourself 1 point if it applies to your organisation today and 0 if it does not. A score of 5 means your operating model is ready to scale. A score of 0 means the operating model conversation needs to happen before the next deployment decision.

Statement 1. Our AI programme has a named business accountability owner above the use-case level, not just a technical owner.

Statement 2. Data sharing between domains operates under a standing governance framework, not case-by-case negotiation.

Statement 3. Our AI use cases are tracked against adoption and business outcomes at 6 and 12 months, not just at delivery.

Statement 4. Our AI governance body makes prioritisation and risk decisions. It does not only advise.

Statement 5. We have more AI models in active, measured production use than pilots marked “complete” but no longer running.

What your score means:

5/5 — Operating model ready. Your foundations are in place. The constraint is likely execution speed or use-case selection.

3 to 4 — Partially ready. You have some foundations but specific gaps will block scale. Identify which statements scored 0 and treat them as pre-conditions for the next deployment wave.

1 to 2 — Operating model work needed first. Deploying more AI into this structure will multiply complexity, not capability. The operating model redesign needs to run in parallel with, or ahead of, the next AI initiative.

0 — Stop and redesign. Every AI initiative in the pipeline is at risk. The bottleneck is not technology. It is jurisdiction, data ownership, and accountability structure.
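The scoring bands can be written down as a short function. This is a minimal sketch that assumes one point for each statement that holds true for the organisation, which is the reading consistent with the bands above; the function names are introduced here for illustration.

```python
from typing import Sequence


def readiness_band(answers: Sequence[bool]) -> tuple[int, str]:
    """Score the five-statement diagnostic and return (score, band)."""
    if len(answers) != 5:
        raise ValueError("the diagnostic has exactly five statements")
    score = sum(1 for a in answers if a)
    if score == 5:
        band = "Operating model ready"
    elif score >= 3:
        band = "Partially ready"
    elif score >= 1:
        band = "Operating model work needed first"
    else:
        band = "Stop and redesign"
    return score, band


def gaps(answers: Sequence[bool]) -> list[int]:
    """Statement numbers (1 to 5) that scored 0."""
    return [i + 1 for i, a in enumerate(answers) if not a]


# Example: statements 2 and 5 do not hold for this organisation.
answers = [True, False, True, True, False]
print(readiness_band(answers))
print(gaps(answers))
```

The `gaps` output is the more useful of the two: the statements that scored 0 are the pre-conditions to close before the next deployment wave.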

The Versatile Consulting approach

At Versatile Consulting, we work with senior leadership at multinationals to design operating models that can absorb AI at scale. Specifically, this means defining the governance structures, accountability frameworks, and data ownership models that sit underneath the technology decisions.

For telco specifically, we have developed a diagnostic methodology that maps the current telco AI operating model against the requirements of the AI use cases in the pipeline. It identifies the specific gaps that will block deployment and produces a sequenced plan for closing them.

If this resonates with the situation your organisation is in, we would be glad to have a direct conversation.

Contact us or connect with Saket Bivalkar directly on LinkedIn.

Versatile Consulting is a boutique consultancy specialising in operating model design for multinationals and end-to-end AI transformation. We work with C-suite leaders across financial services, telecommunications, life sciences, and energy.

FAQs

Why do most telecom AI transformation programmes stall after the pilot stage?

Most telco AI pilots stall because the operating model was never redesigned to absorb the model’s output. The technology performs as expected in a controlled environment, but when it reaches production it runs into fragmented data ownership, unclear accountability, and workflows that nobody gave the AI team authority to change. The model produces a recommendation. Nobody is mandated to act on it. The programme gets relabelled a pilot and quietly deprioritised.

What should a telco CTO own in an AI transformation that they typically do not?

A telecom CTO typically owns the technology layer: infrastructure, platforms, vendor relationships, and model delivery. What they rarely own, but need to, are the decision rights above the technology layer. That means defining who is accountable when a model is wrong, who controls data at the point of inference across domains, and how model outputs connect to operational workflows. Without authority over these questions, the CTO ends up delivering working models into an organisation that is not structured to use them.

How does a fragmented operating model block AI deployment in telecoms?

Telco organisations are structured around control and redundancy, not cross-domain data flow. AI creates value precisely at the intersection of domains: network performance informing churn prediction, field service data informing maintenance scheduling. A fragmented operating model means that data sharing between those domains requires case-by-case negotiation, that accountability for outcomes sits with nobody in particular, and that any model requiring inputs from multiple domains will hit governance delays that erode its usefulness before it reaches scale.

What operating model changes does a telco need to make before deploying AI at scale?

Three changes matter most. First, shared data infrastructure needs to be funded and governed as a strategic asset, not assembled on a use-case-by-use-case basis. Second, the AI transformation function needs explicit decision rights over the domains it depends on, not just an advisory mandate. Third, AI deployment needs to be treated as a permanent operational capability with a funded team responsible for model performance in production, not as a project that closes when the model goes live.

How do the most successful telcos structure AI governance differently from the rest?

Telcos making measurable progress on AI at scale share a few structural traits. Their AI transformation owner sits at C-suite level with authority that crosses network, IT, and commercial domains. Their AI governance body makes decisions rather than issuing recommendations. And they have a distinct model operations function, separate from the delivery team, responsible for adoption rates, threshold calibration, and business outcomes at 12 months, not just technical accuracy at launch.

How do we know if our telco has an operating model problem rather than a technology problem?

If your AI programme has a technical owner but no business accountability owner above the use-case level, you have an operating model problem. If data sharing between domains requires a meeting and an approval process each time, you have an operating model problem. If your AI use cases are measured at delivery rather than at adoption and business outcome six months later, you have an operating model problem. The technology is rarely the constraint. The jurisdiction is.

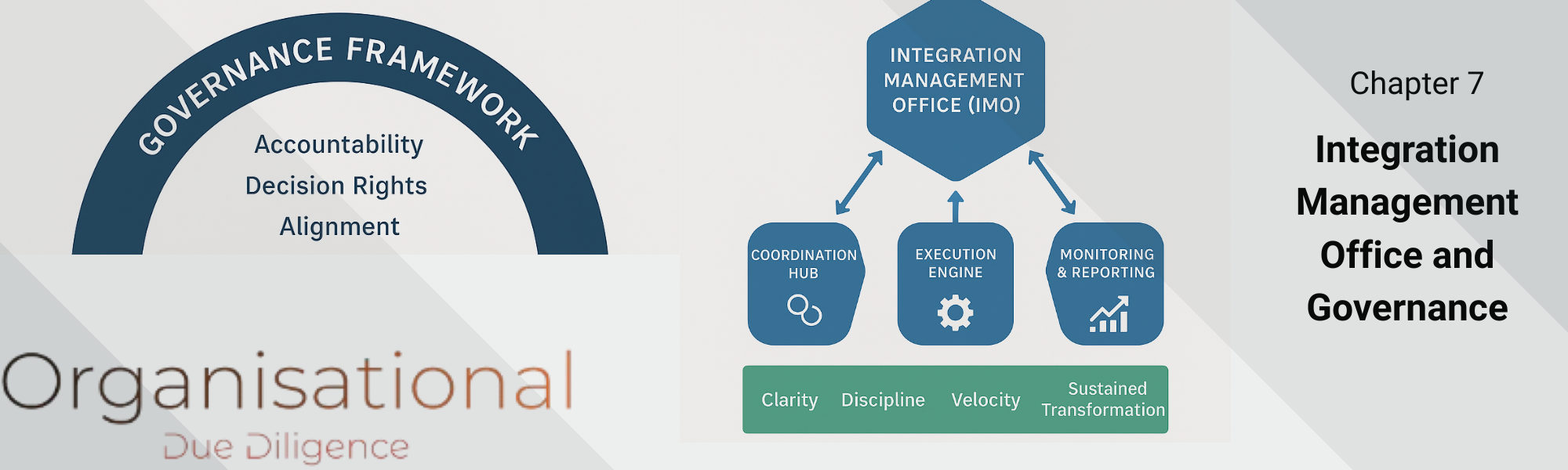

Integration Management Office and Governance

Discover how to set up an Integration Management Office (IMO) and governance framework to drive clarity, accountability, and success in post-merger integration using Organisational Due Diligence.

Integration Gap Identification and Prioritisation

After completing a deep discovery across structure, teams, culture, and leadership during the organisational due diligence, the next step is to synthesise the findings into actionable insights for the Integration or the transformation.

The Unseen Flaws in Organisations

Uncover unseen threats in operating models and leadership using lessons from Abraham Wald to build resilient, adaptive organizations.